Carol Todd files lawsuit against Meta, TikTok and other social media platforms; accuses companies of targeting and exploiting youth

Warning: Disturbing content

Twelve years after the death of her daughter, Carol Todd filed a lawsuit that aims to protect young people from social media platforms which, she argues, “exploit children and adolescents.”

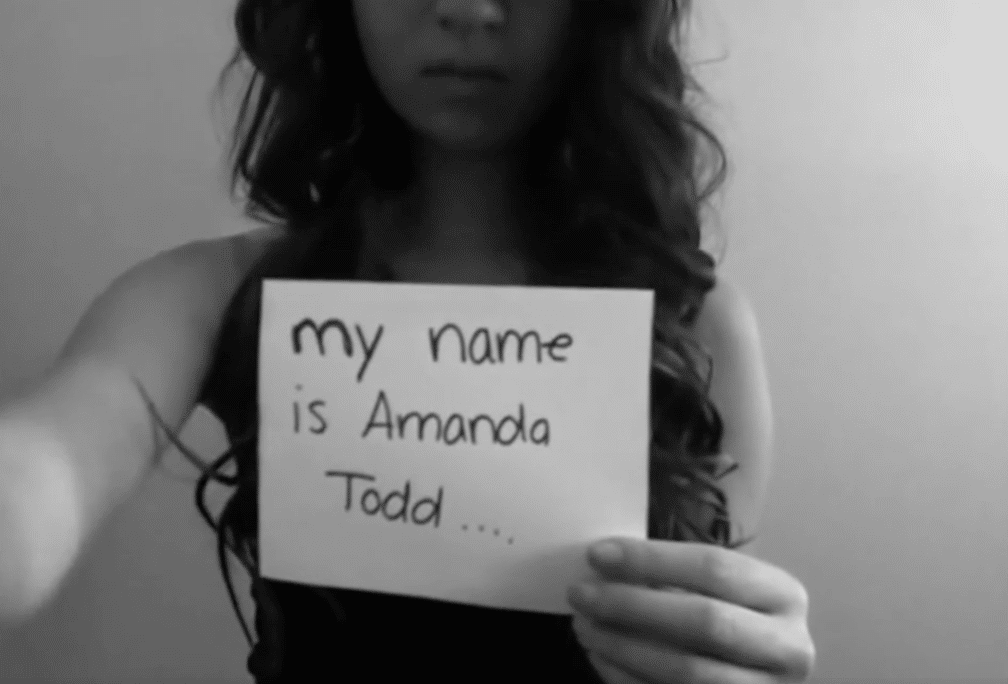

Port Coquitlam youth Amanda Todd died by suicide after suffering extensive online sextortion and harassment. Amanda was 15.


Filed in the Superior Court of California, County of Los Angeles on Oct. 10, the lawsuit targets Meta – formerly Facebook – as well as Snap, TikTok, Google, YouTube, and Discord.

Carol Todd is joined in the suit by 10 families, each of whom reported their children using social media platforms and subsequently suffering from depression and anxiety. In some cases teens were harassed and sextorted.

Speaking to CBC, Google contended the lawsuit’s allegations aren’t true. A spokesperson for the company explained its services are built with parental controls and provide age-appropriate experiences.

Meta

The lawsuit alleges the companies all marketed their products to children, quoting an internal email sent by a Meta product designer which stated: “the young ones are the best ones. You want to bring people to your service young and early.”

The lawsuit describes the social media giant as considering – but not implementing – default safety settings for teens that would protect children from unwanted interactions.

“Once again, Meta leadership chose not to implement these types of simple changes, despite its knowledge of preventable harms to children,” the lawsuit stated.

Facebook’s lack of reasonable safety features allowed Aydin Coban, who was eventually found guilty of five counts related to Amanda Todd’s online sextortion, to get information about Todd’s friends and family.

“Meta further allowed this Facebook predator to open more than 20 different Facebook accounts,” the lawsuit stated.

Coban sent a topless photo of Amanda “to everyone she knew and even uploaded it to Meta and used it as his profile picture on several new Facebook accounts.”

Nearly a decade after Amanda’s death, Ohio teenager Braden Markus died by suicide shortly after being contacted on Instagram. After eventually agreeing to exchange compromising photos on Google Hangouts, Markus was sextorted.

“There is no conceivable reason why Meta could not have fixed these defects in the more than ten years between Amanda’s death and Braden’s use of its Instagram product,” the lawsuit stated.

Rewarding ‘extreme usage’

The social media platforms were each designed to affect “the same neurological pleasure circuitry as is involved in addiction to nicotine, alcohol, or cocaine,” according to the lawsuit.

The companies kept young people online and engaged by employing several techniques. Those techniques include incessant notifications, cultivating an “endless feed to keep users scrolling,” intermittent rewards to trigger dopamine and “‘trophies’ to reward extreme usage,” the lawsuit stated.

Meta could slow its content recommendation algorithm at night and likely help many young users get to sleep. Instead, the product is made to “manipulate children into doom scrolling for hours, and during times they are supposed to be sleeping or in school.”

The lawsuit quotes a New Yorker article featuring an interview with Harvard University co-director of the Center for Law, Brain & Behavior Judith Edersheim.

“No other addictive device has ever been so pervasive,” Edersheim told the New Yorker.

The lawsuit also quotes Facebook founding president Sean Parker, who said that, despite the risk they were taking with children’s brains, the company designed its platform to “consume as much of your time and conscious attention as possible.”

The companies undertook “calculated and extensive efforts to deceive consumers about the risks” of their respective platforms, acting with “willful disregard for human life,” the lawsuit stated.

Snap

One of the initial uses of Snapchat, the multimedia messaging app, was to serve as a sexting tool, the suit charges.

Despite the likelihood of misuse, the app was marketed to children and teens.

“Snap’s founders did not decide this because they believed that their product was in any way appropriate or safe for children and teens; but rather, because it was a path to riches,” the lawsuit stated.

While there is a tipline for complaints about content ranging from bullying to human trafficking, deactivated accounts are quickly reconstituted with the same picture and a similar name, according to a former Drug Enforcement Administration special agent quoted in the lawsuit.

TikTok

Companies like TikTok and Google know when dangerous challenges such as the blackout challenge are amplified and targeted at children, according to the lawsuit.

But while there are internal discussions about dangerous trends, the companies tend to take a wait-and-see approach, according to the lawsuit, “even when they know that such decision may result in the death of children.”

YouTube

The video sharing platform utilizes algorithmic recommendations that reinforce political biases and sometimes lead to radicalization, according to the suit.

The suit leans on a study by the Anti-Defamation League which found that “exposure to alternative YouTube channels can serve as gateways to extremist or white supremacist channels.”

Researchers at the University of California, Davis also concluded that YouTube directed users – especially right-leaning users – to “ideologically biased and increasingly radical content.”

Discord

An instant messaging app and social platform, Discord leaves teens vulnerable to receiving friend invitations from anyone in the same server, the lawsuit stated.

“Discord in particular is popular for communities of neo-Nazis and white supremacists to socialize, share hateful memes,” the lawsuit stated, adding that the 2017 “Unite the Right” rally in Charlottesville, Virginia was largely planned on Discord.

The lawsuit quotes from a Slate article which identified Discord as “an ideal place for far-right recruitment.”

“If you hang out with Nazis and racists long enough, what begins as cruel humor can give way to a set of convictions,” the Slate article stated.

The plaintiffs are requesting punitive and compensatory damages.

The lawsuit was launched in California because each of the companies has a place of business in California and is “essentially at home in this state,” the suit stated.

None of the allegations have been proven in court.